- Jul 19, 2024

-

-

Özgün Özerk authored

Fixes #4960

Configuring `FeeManager` enforces the bound `Into<[u8; 32]>` for the `AccountId` type.

Here is how it works currently:

Configuration:

```rust
type FeeManager = XcmFeeManagerFromComponents<
    IsChildSystemParachain<primitives::Id>,
    XcmFeeToAccount<Self::AssetTransactor, AccountId, TreasuryAccount>,
>;
```

`XcmFeeToAccount` struct:

```rust
/// A `HandleFee` implementation that simply deposits the fees into a specific on-chain
/// `ReceiverAccount`.
///
/// It reuses the `AssetTransactor` configured on the XCM executor to deposit fee assets. If
/// the `AssetTransactor` returns an error while calling `deposit_asset`, then a warning will be
/// logged and the fee burned.
pub struct XcmFeeToAccount<AssetTransactor, AccountId, ReceiverAccount>(
    PhantomData<(AssetTransactor, AccountId, ReceiverAccount)>,
);

impl<
        AssetTransactor: TransactAsset,
        AccountId: Clone + Into<[u8; 32]>,
        ReceiverAccount: Get<AccountId>,
    > HandleFee for XcmFeeToAccount<AssetTransactor, AccountId, ReceiverAccount>
{
    fn handle_fee(fee: Assets, context: Option<&XcmContext>, _reason: FeeReason) -> Assets {
        deposit_or_burn_fee::<AssetTransactor, _>(fee, context, ReceiverAccount::get());

        Assets::new()
    }
}
```

`deposit_or_burn_fee()` function:

```rust
/// Try to deposit the given fee in the specified account.
/// Burns the fee in case of a failure.
pub fn deposit_or_burn_fee<AssetTransactor: TransactAsset, AccountId: Clone + Into<[u8; 32]>>(
    fee: Assets,
    context: Option<&XcmContext>,
    receiver: AccountId,
) {
    let dest = AccountId32 { network: None, id: receiver.into() }.into();
    for asset in fee.into_inner() {
        if let Err(e) = AssetTransactor::deposit_asset(&asset, &dest, context) {
            log::trace!(
                target: "xcm::fees",
                "`AssetTransactor::deposit_asset` returned error: {:?}. Burning fee: {:?}. \
                They might be burned.",
                e, asset,
            );
        }
    }
}
```

---

In order to use **another** `AccountId` type (for example, 20-byte addresses for compatibility with Ethereum or Bitcoin), one has to duplicate the code as follows (roughly changing every `32` to `20`):

```rust
/// A `HandleFee` implementation that simply deposits the fees into a specific on-chain
/// `ReceiverAccount`.
///
/// It reuses the `AssetTransactor` configured on the XCM executor to deposit fee assets. If
/// the `AssetTransactor` returns an error while calling `deposit_asset`, then a warning will be
/// logged and the fee burned.
pub struct XcmFeeToAccount<AssetTransactor, AccountId, ReceiverAccount>(
    PhantomData<(AssetTransactor, AccountId, ReceiverAccount)>,
);

impl<
        AssetTransactor: TransactAsset,
        AccountId: Clone + Into<[u8; 20]>,
        ReceiverAccount: Get<AccountId>,
    > HandleFee for XcmFeeToAccount<AssetTransactor, AccountId, ReceiverAccount>
{
    fn handle_fee(fee: XcmAssets, context: Option<&XcmContext>, _reason: FeeReason) -> XcmAssets {
        deposit_or_burn_fee::<AssetTransactor, _>(fee, context, ReceiverAccount::get());

        XcmAssets::new()
    }
}

pub fn deposit_or_burn_fee<AssetTransactor: TransactAsset, AccountId: Clone + Into<[u8; 20]>>(
    fee: XcmAssets,
    context: Option<&XcmContext>,
    receiver: AccountId,
) {
    let dest = AccountKey20 { network: None, key: receiver.into() }.into();
    for asset in fee.into_inner() {
        if let Err(e) = AssetTransactor::deposit_asset(&asset, &dest, context) {
            log::trace!(
                target: "xcm::fees",
                "`AssetTransactor::deposit_asset` returned error: {:?}. Burning fee: {:?}. \
                They might be burned.",
                e, asset,
            );
        }
    }
}
```

---

This results in code duplication, which can be avoided simply by relaxing the trait bound enforced by `XcmFeeToAccount`.

In this PR, I propose to introduce a new trait called `IntoLocation` to express that both `Into<[u8; 32]>` and `Into<[u8; 20]>` should be accepted (and every other `AccountId` type, as long as it implements this trait).

Currently, the `deposit_or_burn_fee()` function converts the `receiver: AccountId` to a location. I think converting an account to `Location` should not be the responsibility of `deposit_or_burn_fee()`.

This trait also decouples the conversion of `AccountId` to `Location` from `deposit_or_burn_fee()` and exposes the `IntoLocation` trait, allowing everyone to come up with their own `AccountId` type and make it compatible with configuring `FeeManager`.

---

Note 1: if there is a better file/location to put `IntoLocation`, I'm all ears.

Note 2: making `deposit_or_burn_fee` or `XcmFeeToAccount` generic was not possible from what I understood, because Rust currently does not support a way to express that a generic should implement either `trait A` or `trait B` (since the compiler cannot guarantee they won't overlap). In this case, they are `Into<[u8; 32]>` and `Into<[u8; 20]>`. See [this](https://github.com/rust-lang/rust/issues/20400) and [this](https://github.com/rust-lang/rfcs/pull/1672#issuecomment-262152934).

Note 3: I should also submit a PR to `frontier` that implements `IntoLocation` for `AccountId20` if this PR gets accepted.

### Summary

This new trait:

- decouples the conversion of `AccountId` to `Location` from the `deposit_or_burn_fee()` function
- makes `XcmFeeToAccount` accept every possible `AccountId` type as long as it implements `IntoLocation`
- is backwards compatible
- keeps the API simple and clean while making it less restrictive

@franciscoaguirre and @gupnik are already aware of the issue, so tagging them here for visibility.

--------- Co-authored-by:Francisco Aguirre <franciscoaguirreperez@gmail.com> Co-authored-by:

Branislav Kontur <bkontur@gmail.com> Co-authored-by:

Adrian Catangiu <adrian@parity.io> Co-authored-by: command-bot <>
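
The `IntoLocation` idea from the commit above can be illustrated with a minimal, self-contained sketch. Everything here is an assumption made for illustration: `Location`, `Account32`, `Account20` and `fee_destination` are simplified stand-ins, not the real `xcm` crate types, and the trait's actual signature in polkadot-sdk may differ.

```rust
/// Simplified stand-in for an XCM location (hypothetical; the real type has
/// parents, junctions and an optional network id).
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Location {
    AccountId32 { id: [u8; 32] },
    AccountKey20 { key: [u8; 20] },
}

/// Assumed shape of the proposed trait: each account type decides for itself
/// how it maps to a `Location`.
pub trait IntoLocation {
    fn into_location(self) -> Location;
}

/// Hypothetical 32-byte account type.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Account32(pub [u8; 32]);

/// Hypothetical 20-byte (Ethereum-style) account type.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Account20(pub [u8; 20]);

impl IntoLocation for Account32 {
    fn into_location(self) -> Location {
        Location::AccountId32 { id: self.0 }
    }
}

impl IntoLocation for Account20 {
    fn into_location(self) -> Location {
        Location::AccountKey20 { key: self.0 }
    }
}

/// Stand-in for the fee handler: it is bounded by `IntoLocation` instead of
/// `Into<[u8; 32]>`, so a single generic function serves both account sizes.
pub fn fee_destination<A: IntoLocation>(receiver: A) -> Location {
    receiver.into_location()
}
```

With this shape, calling `fee_destination(Account20([0u8; 20]))` needs no duplicated handler; a new account type only has to provide its own `IntoLocation` impl.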

-

- Jul 18, 2024

-

-

Juan Ignacio Rios authored

v3 PalletInfo had public fields, but v4 does not. Any reason why? I need the PalletInfo fields to be public so I can read the values and build some logic on them at Polimec. @franciscoaguirre, a backport of this would be highly appreciated.

Adrian Catangiu <adrian@parity.io> Co-authored-by:

Oliver Tale-Yazdi <oliver.tale-yazdi@parity.io>

-

- Jul 17, 2024

-

-

Tom authored

Update the stake.plus bootnode addresses --------- Co-authored-by:Oliver Tale-Yazdi <oliver.tale-yazdi@parity.io>

-

s0me0ne-unkn0wn authored

Closes #4951 (hopefully) @alvicsam can you please check if it passes in the new environment?

-

- Jul 16, 2024

-

-

Andrei Eres authored

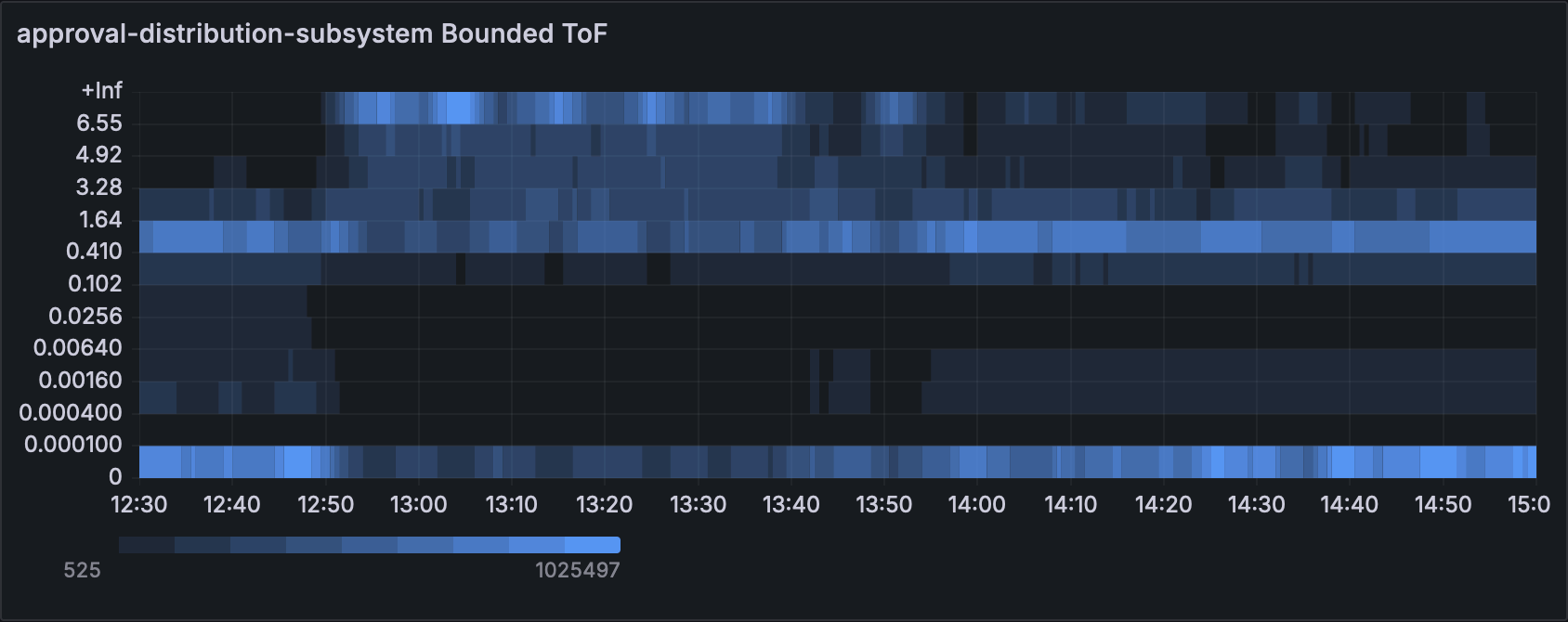

Closes https://github.com/paritytech/polkadot-sdk/issues/577

### Changed

- `orchestra` updated to 0.4.0
- `PeerViewChange` is sent with high priority and should be processed first in a queue.
- To count them in tests, a tracker was added to TestSender and TestOverseer. It acts more like a smoke test though.

### Testing on Versi

The changes were tested on Versi with two objectives:

1. Make sure the node functionality does not change.
2. See how the changes affect performance.

Test setup:

- 2.5 hours for each case
- 100 validators
- 50 parachains
- validatorsPerCore = 2
- neededApprovals = 100
- nDelayTranches = 89
- relayVrfModuloSamples = 50

During the test period, all nodes ran without any crashes, which satisfies the first objective.

To estimate the change in performance we used ToF charts. The graphs show that there are no spikes at the top as there were before. This proves that our hypothesis is correct.

### Normalized charts with ToF

[Before](https://grafana.teleport.parity.io/goto/ZoR53ClSg?orgId=1)
[After](https://grafana.teleport.parity.io/goto/6ux5qC_IR?orgId=1)

### Conclusion

The prioritization of subsystem messages reduces the ToF of the networking subsystem, which helps faster propagation of gossip messages.
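
The effect of sending `PeerViewChange` with high priority can be pictured with a small two-lane queue. This is only a sketch under stated assumptions: `Priority` and `PriorityQueue` are hypothetical names, and this is not how `orchestra` 0.4.0 implements its channels.

```rust
use std::collections::VecDeque;

/// Hypothetical priority levels for subsystem messages.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Priority {
    Normal,
    High,
}

/// Two FIFO lanes; the high-priority lane is always drained first.
#[derive(Debug)]
pub struct PriorityQueue<M> {
    high: VecDeque<M>,
    normal: VecDeque<M>,
}

impl<M> PriorityQueue<M> {
    pub fn new() -> Self {
        Self { high: VecDeque::new(), normal: VecDeque::new() }
    }

    /// Enqueue a message in the lane matching its priority.
    pub fn send(&mut self, msg: M, priority: Priority) {
        match priority {
            Priority::High => self.high.push_back(msg),
            Priority::Normal => self.normal.push_back(msg),
        }
    }

    /// Dequeue the oldest high-priority message if there is one, otherwise
    /// the oldest normal-priority message.
    pub fn recv(&mut self) -> Option<M> {
        self.high.pop_front().or_else(|| self.normal.pop_front())
    }
}
```

A message sent with `Priority::High` overtakes any backlog of normal messages while FIFO order is kept within each lane, which matches the "processed first in a queue" behaviour described above.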

-

Andrei Eres authored

A baseline for the statement-distribution regression test was set only in the beginning, and now we see that the actual values are a bit lower. <img width="1001" alt="image" src="https://github.com/user-attachments/assets/40b06eec-e38f-43ad-b437-89eca502aa66"> [Source](https://paritytech.github.io/polkadot-sdk/bench/statement-distribution-regression-bench)

-

Alexandru Gheorghe authored

This is part of the work to further optimize the approval subsystems. If you want to understand the full context, start by reading https://github.com/paritytech/polkadot-sdk/pull/4849#issue-2364261568; however, that's not necessary, as this change is self-contained and nodes would benefit from it regardless of whether subsequent changes land.

While testing with 1000 validators I found out that the logic for determining the validators an assignment should be gossiped to takes a lot of time, because it always iterated through all the peers to determine which are X and Y neighbours and to which we should randomly gossip (4 samples).

This can be optimised so that we don't have to iterate through all peers for each new assignment, by fetching the list of X and Y peer ids from the topology first and then stopping the loop once we have taken the 4 random samples.

With these improvements we reduce the total CPU time spent in approval-distribution w...
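
The optimisation described above (take the X and Y neighbours directly, then stop as soon as the random samples are collected) can be sketched as follows. This is an illustrative sketch, not the approval-distribution code: `PeerId` is a stand-in type, `select_recipients` is a hypothetical helper, and the candidate list is assumed to have been shuffled by the caller already.

```rust
use std::collections::HashSet;

/// Stand-in for a network peer id (assumption for this sketch).
pub type PeerId = u32;

/// Returns the peers an assignment should be gossiped to: all X and Y
/// neighbours, plus at most `samples` extra peers from `shuffled_candidates`.
/// The loop over candidates stops as soon as enough samples were taken, so
/// the full peer set is never scanned.
pub fn select_recipients(
    x_neighbours: &[PeerId],
    y_neighbours: &[PeerId],
    shuffled_candidates: impl IntoIterator<Item = PeerId>,
    samples: usize,
) -> Vec<PeerId> {
    let mut seen: HashSet<PeerId> = HashSet::new();
    let mut out = Vec::new();

    // Required routing: neighbours come straight from the topology.
    for &peer in x_neighbours.iter().chain(y_neighbours) {
        if seen.insert(peer) {
            out.push(peer);
        }
    }

    // Random routing: take samples until the quota is reached, then stop.
    let mut taken = 0;
    for peer in shuffled_candidates {
        if taken == samples {
            break;
        }
        if seen.insert(peer) {
            out.push(peer);
            taken += 1;
        }
    }
    out
}
```

Passing `samples = 4` mirrors the "4 samples" mentioned in the commit message; peers that are already neighbours are skipped and do not count towards the quota.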

-

- Jul 15, 2024

-

-

Jun Jiang authored

This should remove nearly all usage of `sp-std` except:

- bridge and bridge-hubs
- a few of the frame crates re-export `sp-std`; keep them for now
- there is a usage of `sp_std::Writer` that I don't know how to move

Please review the proc-macro changes carefully. I'm not sure I'm doing it the right way.

Note: need `/bot fmt`

--------- Co-authored-by:Bastian Köcher <git@kchr.de> Co-authored-by: command-bot <>

-

Jun Jiang authored

It says `Will be removed after July 2023` but that's not true

Oliver Tale-Yazdi <oliver.tale-yazdi@parity.io> Co-authored-by:

Bastian Köcher <git@kchr.de>

-

- Jul 12, 2024

-

-

Andrei Eres authored

Part of https://github.com/paritytech/polkadot-sdk/issues/4334

-

Bastian Köcher authored

This improves logging in the xcm-executor to have better debuggability when executing an XCM message.

-

- Jul 10, 2024

-

-

James Wilson authored

Enabling this feature when building the `polkadot` crate will lead to it being enabled for the builtin westend and rococo runtimes. As a result, a merkleized metadata hash will be computed (at some time cost) in those runtimes, which will allow transactions which include a hash via the `CheckMetadataHash` extension to work. The idea is that this is useful for being able to test/experiment with the `CheckMetadataHash` extension against local nodes. --------- Co-authored-by: command-bot <> Co-authored-by:Bastian Köcher <git@kchr.de>

-

- Jul 09, 2024

-

-

Francisco Aguirre authored

It was added to v4 and v3 but was missing from v2

-

Alin Dima authored

The update is tracked by: https://github.com/paritytech/polkadot-sdk/issues/3699 However, this is not worth doing at this point since it will change in the future for phase 2 of the implementation. Still, it's useful to let people know that the information is not the most up to date.

-

Serban Iorga authored

`polkadot-parachain` simplifications and deduplications

Details in the commit messages. Just copy-pasting the last commit description since it introduces the biggest changes:

```
Implement a more structured way to define a node spec

- use traits instead of bounds for `rpc_ext_builder()`, `build_import_queue()`, `start_consensus()`
- add a `NodeSpec` trait for defining the specifications of a node
- deduplicate the code related to building a node's components / starting a node
```

The other changes are much smaller, most of them trivial, and are isolated in separate commits.

Or Grinberg authored

Fixes #3770

Added `clear_origin` as an allowed command after commands that load the holdings register, in the safe xcm builder.

Checklist

- [x] My PR includes a detailed description as outlined in the "Description" section above
- [x] My PR follows the [labeling requirements](https://github.com/paritytech/polkadot-sdk/blob/master/docs/contributor/CONTRIBUTING.md#Process) of this project (at minimum one label for T required)
- [x] I have made corresponding changes to the documentation (if applicable)
- [x] I have added tests that prove my fix is effective or that my feature works (if applicable)

--------- Co-authored-by:

Francisco Aguirre <franciscoaguirreperez@gmail.com> Co-authored-by:

gupnik <mail.guptanikhil@gmail.com>

-

- Jul 08, 2024

-

-

Egor_P authored

This PR backports regular version bumps and prdocs reordering from the 1.14.0 release branch to master

-

- Jul 05, 2024

-

-

Sebastian Kunert authored

Part of #3168
On top of #3568

### Changes Overview

- Introduces a new collator variant in `cumulus/client/consensus/aura/src/collators/slot_based/mod.rs`
- Two tasks are part of that module, one for block building and one for collation building and submission.
- Introduces a new variant of `cumulus-test-runtime` which has 2s slot duration, used for zombienet testing
- Zombienet tests for the new collator

**Note:** This collator is considered experimental and should only be used for testing and exploration for now.

### Comparison with `lookahead` collator

- The new variant is slot based, meaning it waits for the next slot of the parachain, then starts authoring
- The search for potential parents remains mostly unchanged from lookahead
- As anchor, we use the current best relay parent
- In general, the new collator tends to be anchored to one relay parent earlier. `lookahead` generally waits for a new relay block to arrive before it attempts to build a block. ...

-

- Jul 03, 2024

-

-

Axay Sagathiya authored

**Backable Candidate**: If a candidate receives enough supporting Statements from the Parachain Validators currently assigned, that candidate is considered backable.

**Backed Candidate**: A Backable Candidate noted in a relay-chain block.

---

When the candidate backing subsystem receives the `GetBackedCandidates` message, it sends back **backable** candidates, not **backed** candidates. So we should rename this message to `GetBackableCandidates`.

Co-authored-by:Bastian Köcher <git@kchr.de>

-

Serban Iorga authored

Related to https://github.com/paritytech/polkadot-sdk/issues/4523

Extracting part of https://github.com/paritytech/polkadot-sdk/pull/1903 (credits to @Lederstrumpf for the high-level strategy), but also introducing significant adjustments both to the approach and to the code. The main adjustment is that `ForkVotingProof` accepts only one vote, compared to the original version which accepted a `vec![]`. With this approach more calls are needed in order to report multiple equivocated votes on the same commit, but it greatly simplifies the checking logic. We can add support for reporting multiple signatures at once in the future.

There are 2 things that are missing in order to consider this issue done, but I would propose to do them in a separate PR since this one is already pretty big:

- benchmarks/computing a weight for the new extrinsic (this wasn't present in https://github.com/paritytech/polkadot-sdk/pull/1903 either)
- exposing an API for generating the ancestry proof. I'm not sure if we should do this in the Mmr pallet or in the Beefy pallet

Co-authored-by:

Robert Hambrock <roberthambrock@gmail.com> --------- Co-authored-by:

Adrian Catangiu <adrian@parity.io>

-

Alexandru Gheorghe authored

With random connectivity and latency it is hard to actually measure a delta in the benchmarking, so disable them in order to get fully deterministic behaviour when measuring performance.

At least on my machine with this configuration the results for approval-throughput are really similar between subsequent runs:

```
CPU usage, seconds            total   per block
approval-distribution       36.9025      3.6902
approval-distribution       36.7579      3.6758
approval-distribution       37.0418      3.7042
approval-distribution       37.0339      3.7034
approval-distribution       36.9342      3.6934
approval-distribution       36.7177      3.6718
approval-voting             52.7756      5.2776
approval-voting             52.5999      5.2600
approval-voting             53.2158      5.3216
approval-voting             53.2493      5.3249
approval-voting             52.8524      5.2852
approval-voting             52.8611      5.2861
approval-voting             52.8210      5.2821
```

--------- Signed-off-by:Alexandru Gheorghe <alexandru.gheorghe@parity.io>

-

- Jun 27, 2024

-

-

Niklas Adolfsson authored

Partly fixes https://github.com/paritytech/polkadot-sdk/pull/4890#discussion_r1655548633

The offchain API still needs to be updated to hyper v1.0, and I opened an issue for it; it uses low-level HTTP body features that have been removed.

-

- Jun 26, 2024

-

-

Muharem Ismailov authored

Introduce an optional auto-increment setup for the IDs of new assets. --------- Co-authored-by:

joe petrowski <25483142+joepetrowski@users.noreply.github.com> Co-authored-by:

Bastian Köcher <git@kchr.de>

-

Anton Vilhelm Ásgeirsson authored

Enables the `request_revenue` and `notify_revenue` parts of [RFC 5 - Coretime Interface](https://polkadot-fellows.github.io/RFCs/approved/0005-coretime-interface.html)

TODO:

- [x] Finish first pass at implementation
- [x] ~~Need to explicitly burn uncollected and dropped revenue~~ Accumulate it instead
- [x] Confirm working on zombienet
- [x] Tests
- [ ] Enable XCM `request_revenue` sending on Coretime chain on Kusama and Polkadot

Fixes: #2209

--------- Co-authored-by:

Dmitry Sinyavin <dmitry.sinyavin@parity.io> Co-authored-by: command-bot <> Co-authored-by:

s0me0ne-unkn0wn <48632512+s0me0ne-unkn0wn@users.noreply.github.com> Co-authored-by:

Dónal Murray <donal.murray@parity.io> Co-authored-by:

Bastian Köcher <git@kchr.de>

-

Branislav Kontur authored

Closes: https://github.com/paritytech/polkadot-sdk/issues/4298

This PR also merges the `xcm-fee-payment-runtime-api` module into `xcm-runtime-api`.

## TODO

- [x] rename `convert` to `convert_location` and add a new one, `convert_account` (opposite direction)
- [x] add to all the testnet runtimes
- [x] check whether polkadot-js supports that automatically or whether it needs to be added manually https://github.com/polkadot-js/api/pull/5917
- [ ] backport/patch for fellows and release (asap)

## Open questions

- [x] should we merge `xcm-runtime-api` and `xcm-fee-payment-runtime-api` into one module, `xcm-runtime-api`?

## Usage

Input:

- `location: VersionedLocation`

Output:

- account_id bytes

--------- Co-authored-by:Bastian Köcher <git@kchr.de>

-

- Jun 25, 2024

-

-

yjh authored

Some primitives have Hex-related trait impls that are enabled by the `rustc-hex` feature. People who use H256/H160 may need these trait impls. --------- Co-authored-by: command-bot <> Co-authored-by:Bastian Köcher <git@kchr.de>

-

Andrei Eres authored

-

- Jun 24, 2024

-

-

dashangcun authored

Signed-off-by:

dashangcun <jchaodaohang@foxmail.com> Co-authored-by:

dashangcun <jchaodaohang@foxmail.com>

-

Muharem Ismailov authored

Remove unused config parameters `ApproveOrigin` and `OnSlash` from the treasury pallet. Add `OnSlash` config parameter to the bounties and tips pallets. part of https://github.com/paritytech/polkadot-sdk/issues/3800

-

Muharem Ismailov authored

Configuration for the maximum member count per rank, with the option for no limit.

-

Oliver Tale-Yazdi authored

After preparing in https://github.com/paritytech/polkadot-sdk/pull/4633, we can also lift all internal dependencies up to the workspace. This does not actually change anything, but uses `workspace = true` for all dependencies. You can check it with:

```bash
git checkout -q $(git merge-base oty-lift-all-deps origin/master)
cargo tree -e features > master.out

git checkout -q oty-lift-all-deps
cargo tree -e features > new.out

diff master.out new.out
```

It did not yet lift 100% of dependencies: some inside of `target.*`, or some that had conflicting aliases introduced recently, remain. I will do these together in a follow-up with CI checks.

Can be reproduced with [zepter](https://github.com/ggwpez/zepter/): `zepter transpose d lift-to-workspace "regex:.*" --version-resolver highest --skip-package "polkadot-sdk" --ignore-errors --fix`.

--------- Signed-off-by:Oliver Tale-Yazdi <oliver.tale-yazdi@parity.io>

-

- Jun 23, 2024

-

-

girazoki authored

Currently the `Initialize` and `Reinitialize` messages in the collationGeneration subsystem fail in the following cases:

- `Initialize` fails if another configuration already exists
- `Reinitialize` fails if no configuration exists

I propose to instead change the behaviour of `Reinitialize` to always set the config regardless of whether one exists or not.

--------- Co-authored-by:

Bastian Köcher <git@kchr.de> Co-authored-by:

Andrei Sandu <54316454+sandreim@users.noreply.github.com>
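
The proposed semantics for `Initialize` and `Reinitialize` can be summarised in a small state sketch. The names `Config`, `State` and `Error` are hypothetical stand-ins chosen for this illustration; the real subsystem carries a richer configuration and handles these as overseer messages.

```rust
/// Hypothetical, minimal configuration for the sketch.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Config {
    pub para_id: u32,
}

#[derive(Debug, PartialEq, Eq)]
pub enum Error {
    AlreadyInitialized,
}

/// Holds the subsystem configuration, if any was set.
#[derive(Debug, Default)]
pub struct State {
    config: Option<Config>,
}

impl State {
    /// `Initialize`: still fails if a configuration already exists.
    pub fn initialize(&mut self, config: Config) -> Result<(), Error> {
        if self.config.is_some() {
            return Err(Error::AlreadyInitialized);
        }
        self.config = Some(config);
        Ok(())
    }

    /// `Reinitialize` (proposed behaviour): always sets the configuration,
    /// whether or not one existed before.
    pub fn reinitialize(&mut self, config: Config) {
        self.config = Some(config);
    }

    pub fn config(&self) -> Option<&Config> {
        self.config.as_ref()
    }
}
```

Under this sketch a caller that does not know whether the subsystem was configured before can always use `reinitialize`, while `initialize` keeps guarding against accidental overwrites.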

-

- Jun 19, 2024

-

-

Tsvetomir Dimitrov authored

Implements most of https://github.com/paritytech/polkadot-sdk/issues/1797

Core sharing (two or more parachains scheduled on the same core with the same `PartsOf57600` value) was not working correctly.

The expected behaviour is to have Backed and Included events in each block for the paras sharing the core, and the paras should take turns. E.g. for two cores we expect: Backed(a); Included(a)+Backed(b); Included(b)+Backed(a); etc. Instead of this, each block contains just one event and there are a lot of gaps (blocks w/o events) during the session.

Core sharing should also work when collators are building collations ahead of time.

TODOs:

- [x] Add a zombienet test verifying that the behaviour mentioned above works.
- [x] prdoc

--------- Co-authored-by:alindima <alin@parity.io>

-

- Jun 18, 2024

-

-

Tin Chung authored

# ISSUE

- Link to the issue: https://github.com/paritytech/polkadot-sdk/issues/3800

# Deliverables

- [x] remove deprecated calls; (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] set explicit coded indexes for Error and Event enums, remove unused variants and keep the same indexes for the rest; (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] remove unused Config's type parameters; (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] remove irrelevant tests and adopt relevant using old api; (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] remove benchmarks for removed calls; (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/1a3d5f1f)
- [x] prdoc (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] remove deprecated methods from the `treasury/README.md` and add up-to-date dispatchable functions documentation (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/d579b673)
- [x] remove deprecated weight functions (https://github.com/paritytech/polkadot-sdk/pull/3820/commits/8f74134b)

> ### Separated to other issues
> - [ ] remove storage items like Proposals and ProposalCount, that are not used anymore

Adjust all treasury pallet instances within polkadot-sdk

- [x] `pallet_bounty`, `tip`, `child_bounties`: https://github.com/openguild-labs/polkadot-sdk/pull/3
- [x] Remove deprecated treasury weight functions used in Westend and Rococo runtime `collective-westend`, `collective-rococo`

Add migration for westend and rococo to clean the data from removed storage items

- [ ] https://github.com/paritytech/polkadot-sdk/pull/3828

# Test Outcomes

Successful tests by running `cargo test --features runtime-benchmarks`

```
running 38 tests
test tests::__construct_runtime_integrity_test::runtime_integrity_tests ... ok
test benchmarking::benchmarks::bench_check_status ... ok
test benchmarking::benchmarks::bench_payout ... ok
test benchmarking::benchmarks::bench_spend_local ... ok
test tests::accepted_spend_proposal_enacted_on_spend_period ... ok
test benchmarking::benchmarks::bench_spend ... ok
test tests::accepted_spend_proposal_ignored_outside_spend_period ... ok
test benchmarking::benchmarks::bench_void_spend ... ok
test benchmarking::benchmarks::bench_remove_approval ... ok
test tests::genesis_funding_works ... ok
test tests::genesis_config_works ... ok
test tests::inexistent_account_works ... ok
test tests::minting_works ... ok
test tests::check_status_works ... ok
test tests::payout_retry_works ... ok
test tests::pot_underflow_should_not_diminish ... ok
test tests::remove_already_removed_approval_fails ... ok
test tests::spend_local_origin_permissioning_works ... ok
test tests::spend_valid_from_works ... ok
test tests::spend_expires ... ok
test tests::spend_works ... ok
test tests::test_genesis_config_builds ... ok
test tests::spend_payout_works ... ok
test tests::spend_local_origin_works ... ok
test tests::spend_origin_works ... ok
test tests::spending_local_in_batch_respects_max_total ... ok
test tests::spending_in_batch_respects_max_total ... ok
test tests::try_state_proposals_invariant_2_works ... ok
test tests::try_state_proposals_invariant_1_works ... ok
test tests::try_state_spends_invariant_2_works ... ok
test tests::try_state_spends_invariant_1_works ... ok
test tests::treasury_account_doesnt_get_deleted ... ok
test tests::try_state_spends_invariant_3_works ... ok
test tests::unused_pot_should_diminish ... ok
test tests::void_spend_works ... ok
test tests::try_state_proposals_invariant_3_works ... ok
test tests::max_approvals_limited ... ok
test benchmarking::benchmarks::bench_on_initialize_proposals ... ok

test result: ok. 38 passed; 0 failed; 0 ignored; 0 measured; 0 filtered out; finished in 0.08s

Doc-tests pallet_treasury

running 2 tests
test substrate/frame/treasury/src/lib.rs - (line 52) ... ignored
test substrate/frame/treasury/src/lib.rs - (line 79) ... ignored

test result: ok. 0 passed; 0 failed; 2 ignored; 0 measured; 0 filtered out; finished in 0.00s
```

polkadot address: 19nSqFQorfF2HxD3oBzWM3oCh4SaCRKWt1yvmgaPYGCo71J

-

- Jun 17, 2024

-

-

Kantapat chankasem authored

This PR is part of #3326 --------- Co-authored-by:

Kian Paimani <5588131+kianenigma@users.noreply.github.com> Co-authored-by:

Bastian Köcher <git@kchr.de>

-

- Jun 13, 2024

-

-

Egor_P authored

This PR backports regular version bumps and prdocs reordering from the release branch back to master

-

Branislav Kontur authored

This PR aligns the settings for `MaxFreezes`, `RuntimeFreezeReason`, and `FreezeIdentifier`. #### Future work and improvements https://github.com/paritytech/polkadot-sdk/issues/2997 (remove `MaxFreezes` and `FreezeIdentifier`)

-

- Jun 11, 2024

-

-

cheme authored

This branch proposes to avoid clones in append by storing offset and size in the previous overlay depth. That way, on rollback we can just truncate and change the size of the existing value. To avoid copies, it also means that:

- append on a new overlay layer if there is an existing value: create a new Append entry with the previous offsets, and take the memory of the previous overlay value.
- rollback on append: restore the value by applying offsets and put it back in the previous overlay value.
- commit on append: the appended value overwrites the previous value (which is an empty vec, as the memory was taken). Offsets of the committed layer are dropped; if there are offsets in the previous overlay layer, they are maintained.
- set value (or remove) when append offsets are present: the current appended value is moved back to the previous overlay value with offsets applied, and the current empty entry is overwritten (no offsets kept).

The modify mechanism is not needed anymore. This branch lacks testing and breaks some existing genericity (a bit of duplicated code), but it is good to have to check the direction.

Generally I am not sure if it is worth it or if we should just favor different directions (transient blob storage for instance), as the current append mechanism is a bit tricky (having a variable length in first position means we sometimes need to insert in front of a vector).

Fix #30.

--------- Signed-off-by:

Alexandru Vasile <alexandru.vasile@parity.io> Co-authored-by:

EgorPopelyaev <egor@parity.io> Co-authored-by:

Alexandru Vasile <60601340+lexnv@users.noreply.github.com> Co-authored-by:

Bastian Köcher <git@kchr.de> Co-authored-by:

Oliver Tale-Yazdi <oliver.tale-yazdi@parity.io> Co-authored-by:

joe petrowski <25483142+joepetrowski@users.noreply.github.com> Co-authored-by:

Liam Aharon <liam.aharon@hotmail.com> Co-authored-by:

Kian Paimani <5588131+kianenigma@users.noreply.github.com> Co-authored-by:

Branislav Kontur <bkontur@gmail.com> Co-authored-by:

Bastian Köcher <info@kchr.de> Co-authored-by:

Sebastian Kunert <skunert49@gmail.com>

-

- Jun 10, 2024

-

-

Alexandru Gheorghe authored

... this is superfluous because the set_reserved_peers implementation already calls this method here: https://github.com/paritytech/polkadot-sdk/blob/cdb297b1 /substrate/client/network/src/protocol_controller.rs#L571, so the call just ends up producing these warnings whenever we manipulate the peers set.

```
Trying to remove unknown reserved node 12D3KooWRCePWvHoBbz9PSkw4aogtdVqkVDhiwpcHZCqh4hdPTXC from SetId(3)
peerset warnings (from different peers)
```

Signed-off-by:

Alexandru Gheorghe <alexandru.gheorghe@parity.io>

-

Alexandru Gheorghe authored

Add some debug logs to be able to identify the validators and parachains that have the most no-shows. This metric is valuable because it will help us identify validators and parachains that regularly have this problem. From the validator_index we can then query the on-chain information and identify the exact validator that is causing the no-shows. --------- Signed-off-by:Alexandru Gheorghe <alexandru.gheorghe@parity.io>

-