- Apr 03, 2024

-

-

Andrei Sandu authored

Fixes https://github.com/paritytech/polkadot-sdk/issues/3775

Additionally moves the claim queue fetch utilities into `subsystem-util`.

TODO:
- [x] fix tests
- [x] add elastic scaling tests

---------

Signed-off-by:

Andrei Sandu <[email protected]>

-

- Apr 02, 2024

-

-

Serban Iorga authored

Working towards migrating the `parity-bridges-common` repo inside `polkadot-sdk`. This PR upgrades some dependencies in order to align them with the versions used in `parity-bridges-common`. Related to https://github.com/paritytech/parity-bridges-common/issues/2538

-

- Apr 01, 2024

-

-

Alexandru Gheorghe authored

Runtime release 1.2 includes bumping the ParachainHost APIs up to v10, so let's move all the released APIs out of the vstaging folder. This PR does not include any logic changes, only renaming of modules and some moving around. Signed-off-by:Alexandru Gheorghe <[email protected]>

-

Alexandru Gheorghe authored

The metric records the current protocol_version of the validator that just connected together with peer_map.len(), which contains all connected peers. As a result the metric is wrong: it does not tell us how many peers are connected per version, because it always records the total number of peers. Fix this by counting by version inside peer_map. Additionally, because that might be a bit heavier than len(), publish it only on active leaves. --------- Signed-off-by:Alexandru Gheorghe <[email protected]>
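The per-version counting described above can be sketched as follows. This is a minimal illustration, not the code from the PR: `ProtocolVersion`, `PeerData`, the `u64` peer key, and `count_peers_by_version` are all hypothetical stand-ins.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for the protocol version negotiated with a peer.
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
pub enum ProtocolVersion {
    V1,
    V2,
    V3,
}

/// Hypothetical per-peer data; only the version matters for the metric.
pub struct PeerData {
    pub version: ProtocolVersion,
}

/// Count connected peers per protocol version by walking the whole map,
/// instead of attributing `peer_map.len()` to a single version.
pub fn count_peers_by_version(
    peer_map: &HashMap<u64, PeerData>,
) -> HashMap<ProtocolVersion, usize> {
    let mut counts = HashMap::new();
    for peer in peer_map.values() {
        *counts.entry(peer.version).or_insert(0) += 1;
    }
    counts
}
```

Walking the map is linear in the number of peers, which is why it would make sense to compute it once per active-leaves update rather than on every connection event.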

-

- Mar 31, 2024

-

-

Bastian Köcher authored

Closes: https://github.com/paritytech/polkadot-sdk/issues/3906

-

- Mar 27, 2024

-

-

Andrei Sandu authored

This works only for collators that implement the `collator_fn` allowing `collation-generation` subsystem to pull collations triggered on new heads.

Also enables `request_v2::CollationFetchingResponse::CollationWithParentHeadData` for test adder/undying collators.

TODO:
- [x] fix tests
- [x] new tests
- [x] PR doc

---------

Signed-off-by:Andrei Sandu <[email protected]>

-

- Mar 26, 2024

-

-

Andrei Eres authored

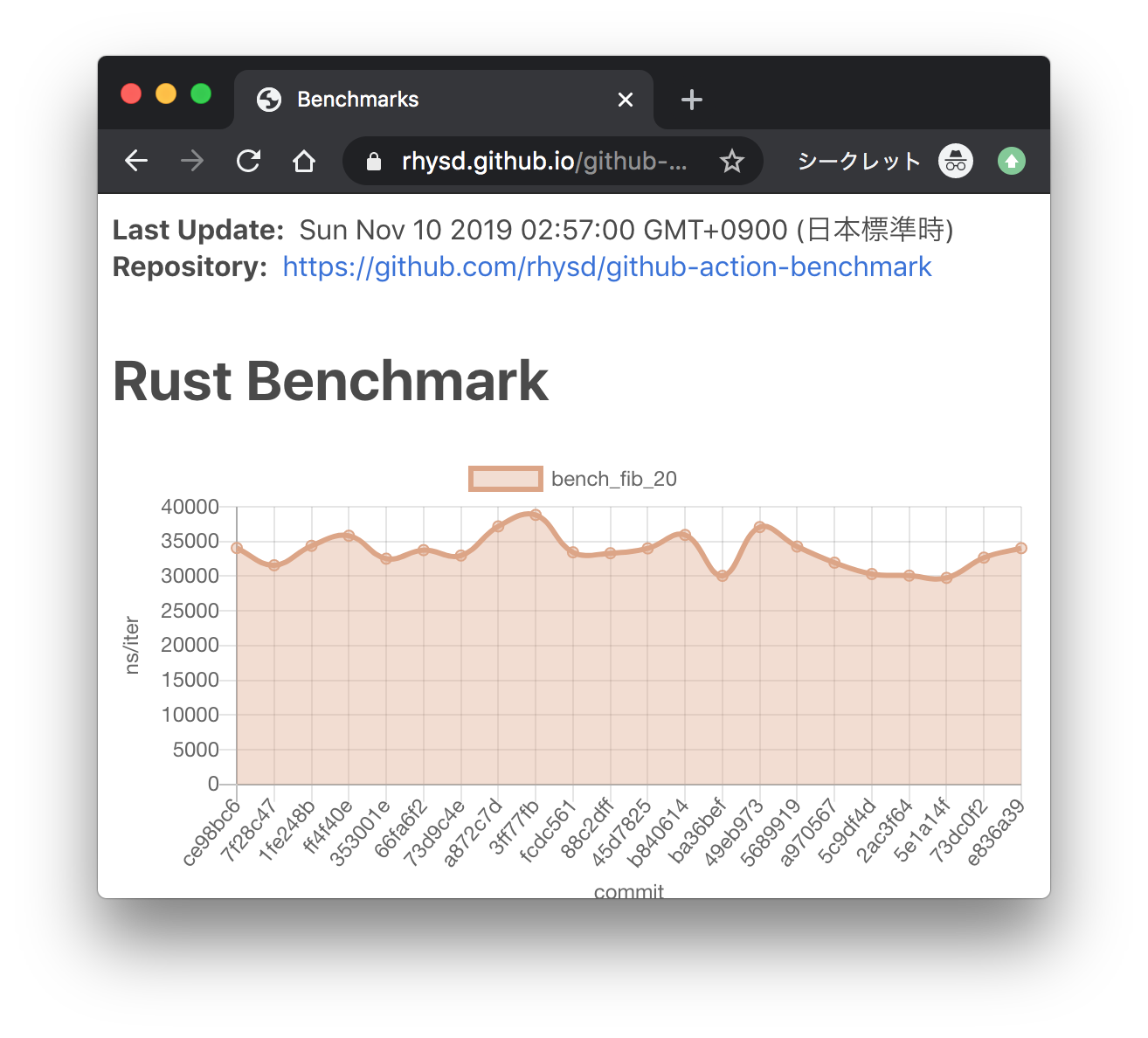

Here we add the ability to save subsystem benchmark results in JSON format to display them as graphs To draw graphs, CI team will use [github-action-benchmark](https://github.com/benchmark-action/github-action-benchmark). Since we are using custom benchmarks, we need to prepare [a specific data type](https://github.com/benchmark-action/github-action-benchmark?tab=readme-ov-file#examples): ``` [ { "name": "CPU Load", "unit": "Percent", "value": 50 } ] ``` Then we'll get graphs like this:  [A live page with graphs](https://benchmark-action.github.io/github-action-benchmark/dev/bench/ ) --------- Co-authored-by:

ordian <[email protected]>
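As a rough illustration of producing the JSON shape shown in this commit message, here is a dependency-free sketch. `BenchmarkEntry`, `escape_json`, and `to_json` are hypothetical names, not the types used by `subsystem-bench`, and the sketch assumes all values are finite (NaN and infinity are not valid JSON).

```rust
/// One data point in the shape shown in the commit message: name, unit, value.
pub struct BenchmarkEntry {
    pub name: String,
    pub unit: String,
    pub value: f64,
}

/// Escape the characters that would break a JSON string literal.
fn escape_json(s: &str) -> String {
    let mut out = String::with_capacity(s.len());
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// Serialize entries as a JSON array of `{name, unit, value}` objects.
/// Assumes every `value` is finite.
pub fn to_json(entries: &[BenchmarkEntry]) -> String {
    let items: Vec<String> = entries
        .iter()
        .map(|e| {
            format!(
                "{{\"name\":\"{}\",\"unit\":\"{}\",\"value\":{}}}",
                escape_json(&e.name),
                escape_json(&e.unit),
                e.value
            )
        })
        .collect();
    format!("[{}]", items.join(","))
}
```

In a real crate a serialization library would normally replace the hand-written escaping; it is spelled out here only to keep the sketch self-contained.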

-

Dcompoze authored

**Update:** Pushed additional changes based on the review comments.

**This pull request fixes various spelling mistakes in this repository.**

Most of the changes are contained in the first **3** commits:

- `Fix spelling mistakes in comments and docs`
- `Fix spelling mistakes in test names`
- `Fix spelling mistakes in error messages, panic messages, logs and tracing`

Other source code spelling mistakes are separated into individual commits for easier reviewing:

- `Fix the spelling of 'authority'`
- `Fix the spelling of 'REASONABLE_HEADERS_IN_JUSTIFICATION_ANCESTRY'`
- `Fix the spelling of 'prev_enqueud_messages'`
- `Fix the spelling of 'endpoint'`
- `Fix the spelling of 'children'`
- `Fix the spelling of 'PenpalSiblingSovereignAccount'`
- `Fix the spelling of 'PenpalSudoAccount'`
- `Fix the spelling of 'insufficient'`
- `Fix the spelling of 'PalletXcmExtrinsicsBenchmark'`
- `Fix the spelling of 'subtracted'`
- `Fix the spelling of 'CandidatePendingAvailability'`
- `Fix the spelling of 'exclusive'`
- `Fix the spelling of 'until'`
- `Fix the spelling of 'discriminator'`
- `Fix the spelling of 'nonexistent'`
- `Fix the spelling of 'subsystem'`
- `Fix the spelling of 'indices'`
- `Fix the spelling of 'committed'`
- `Fix the spelling of 'topology'`
- `Fix the spelling of 'response'`
- `Fix the spelling of 'beneficiary'`
- `Fix the spelling of 'formatted'`
- `Fix the spelling of 'UNKNOWN_PROOF_REQUEST'`
- `Fix the spelling of 'succeeded'`
- `Fix the spelling of 'reopened'`
- `Fix the spelling of 'proposer'`
- `Fix the spelling of 'InstantiationNonce'`
- `Fix the spelling of 'depositor'`
- `Fix the spelling of 'expiration'`
- `Fix the spelling of 'phantom'`
- `Fix the spelling of 'AggregatedKeyValue'`
- `Fix the spelling of 'randomness'`
- `Fix the spelling of 'defendant'`
- `Fix the spelling of 'AquaticMammal'`
- `Fix the spelling of 'transactions'`
- `Fix the spelling of 'PassingTracingSubscriber'`
- `Fix the spelling of 'TxSignaturePayload'`
- `Fix the spelling of 'versioning'`
- `Fix the spelling of 'descendant'`
- `Fix the spelling of 'overridden'`
- `Fix the spelling of 'network'`

Let me know if this structure is adequate.

**Note:** The usage of the words `Merkle`, `Merkelize`, `Merklization`, `Merkelization`, `Merkleization`, is somewhat inconsistent but I left it as it is.

~~**Note:** In some places the term `Receival` is used to refer to message reception, IMO `Reception` is the correct word here, but I left it as it is.~~

~~**Note:** In some places the term `Overlayed` is used instead of the more acceptable version `Overlaid` but I also left it as it is.~~

~~**Note:** In some places the term `Applyable` is used instead of the correct version `Applicable` but I also left it as it is.~~

**Note:** British and American English spellings, e.g. `judgement` vs `judgment`, `initialise` vs `initialize`, `optimise` vs `optimize`, are both present in different places, but I suppose that's understandable given the number of contributors.

~~**Note:** There is a spelling mistake in `.github/CODEOWNERS` but it triggers errors in CI when I make changes to it, so I left it as it is.~~

-

- Mar 25, 2024

-

-

Andrei Eres authored

Adds availability-write regression tests. The results for the `availability-distribution` subsystem are volatile, so I had to reduce the precision of the test.

-

- Mar 19, 2024

-

-

ordian authored

On top of #3302. We want the validators to upgrade first before we add changes to the collation side to send the new variants, which is why this part is extracted into a separate PR. The detection of when to send the parent head is based on the core assignments at the relay parent of the candidate. We probably want to make it more flexible in the future, but for now, it will work for a simple use case when a para always has multiple cores assigned to it. --------- Signed-off-by:

Matteo Muraca <[email protected]> Signed-off-by:

dependabot[bot] <[email protected]> Co-authored-by:

Matteo Muraca <[email protected]> Co-authored-by:

dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com> Co-authored-by:

Juan Ignacio Rios <[email protected]> Co-authored-by:

Branislav Kontur <[email protected]> Co-authored-by:

Bastian Köcher <[email protected]>

-

Bastian Köcher authored

Closes: https://github.com/paritytech/polkadot-sdk/issues/3704

-

- Mar 15, 2024

-

-

ordian authored

Fixes #3128. This introduces a new variant for the collation response from the collator that includes the parent head data. For now, collators won't send this new variant. We'll need to change the collator side of the collator protocol to detect all the cores assigned to a para and send the parent head data in the case when it's more than 1 core. - [x] validate approach - [x] check head data hash
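The decision described above (send the parent head data only when the para has more than one core) could look roughly like the sketch below. `ParaId`, `ResponseKind`, and `response_kind` are hypothetical and simplified; only the variant name `CollationWithParentHeadData` appears elsewhere in this log.

```rust
/// Hypothetical, simplified para identifier for illustration only.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct ParaId(pub u32);

/// Hypothetical tag for which response variant a collator would send.
#[derive(Debug, PartialEq, Eq)]
pub enum ResponseKind {
    Collation,
    CollationWithParentHeadData,
}

/// Pick the response variant from the core assignments at the relay parent.
/// `core_assignments[i]` is the para assigned to core `i`, if any.
pub fn response_kind(core_assignments: &[Option<ParaId>], para: ParaId) -> ResponseKind {
    let assigned_cores = core_assignments
        .iter()
        .filter(|assignment| **assignment == Some(para))
        .count();
    if assigned_cores > 1 {
        ResponseKind::CollationWithParentHeadData
    } else {
        ResponseKind::Collation
    }
}
```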

-

- Mar 13, 2024

-

-

-

Alexandru Gheorghe authored

This is printed every 10 minutes. I see no reason why it shouldn't be in all the logs; it would give us valuable information about what is going on with node connectivity when validators come back to us to report issues. Signed-off-by:Alexandru Gheorghe <[email protected]>

-

- Mar 11, 2024

-

-

Andrei Eres authored

Fixes https://github.com/paritytech/polkadot-sdk/issues/3528

```rust
latency:
  mean_latency_ms = 30 // common sense
  std_dev = 2.0 // common sense
n_validators = 300 // max number of validators, from chain config
n_cores = 60 // 300/5
max_validators_per_core = 5 // default
min_pov_size = 5120 // max
max_pov_size = 5120 // max
peer_bandwidth = 44040192 // from the Parity's kusama validators
bandwidth = 44040192 // from the Parity's kusama validators
connectivity = 90 // we need to be connected to 90-95% of peers
```

-

- Mar 08, 2024

-

-

Alexandru Gheorghe authored

Looking at rococo-asset-hub (https://github.com/paritytech/polkadot-sdk/issues/3519), there seem to be a lot of instances where collators did not advertise their collations. While there are multiple problems there, one of them is that we are connecting to and disconnecting from our assigned validators every block: on reconnect_timeout, every 4s, we call connect_to_validators, which produces 0 validators when all went well, so set_reserved_peers, called from validator discovery, disconnects all our peers. More details here: https://github.com/paritytech/polkadot-sdk/issues/3519#issuecomment-1972667343

Now, this shouldn't be a problem, but it stacks with an existing bug in our network stack where, if we disconnect from a peer, the peer might not notice it, so it won't detect the reconnect either and won't send us the necessary view updates, so we won't advertise the collation to it. More details here: https://github.com/paritytech/polkadot-sdk/issues/3519#issuecomment-1972958276

To avoid hitting this condition that often, let's keep the peers in the reserved set for the entire duration we are allocated to a backing group. Backing group sizes (1 rococo, 3 kusama, 5 polkadot) are really small, so this shouldn't lead to that many connections. Additionally, the validators would disconnect us anyway if we don't advertise anything for 4 blocks.

## TODO
- [x] More testing.
- [x] Confirm on rococo that this is improving the situation. (It doesn't, but just because other things are going wrong there.)

---------

Signed-off-by:

Alexandru Gheorghe <[email protected]>
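The idea of keeping peers reserved for as long as the backing group assignment lasts can be sketched as below. This is an assumption-laden illustration, not the collator-protocol code: `ReservedPeers`, `on_tick`, the `u32` group index, and the `u64` peer ids are all hypothetical.

```rust
use std::collections::HashSet;

/// Hypothetical tracker for the reserved peer set of a collator.
pub struct ReservedPeers {
    /// Backing group we are currently assigned to, if any.
    group: Option<u32>,
    /// Peers kept reserved for the duration of that assignment.
    peers: HashSet<u64>,
}

impl ReservedPeers {
    pub fn new() -> Self {
        ReservedPeers { group: None, peers: HashSet::new() }
    }

    /// Called on every reconnect tick with the current group assignment and
    /// the validators that still need a connection (possibly none).
    pub fn on_tick(&mut self, group: Option<u32>, to_connect: &[u64]) -> &HashSet<u64> {
        if group != self.group {
            // The assignment changed: drop the peers of the previous group.
            self.group = group;
            self.peers.clear();
        }
        // Within one assignment we only ever add peers, so an empty
        // `to_connect` leaves the reserved set untouched.
        self.peers.extend(to_connect.iter().copied());
        &self.peers
    }
}
```

The point of the sketch is the second tick: an empty list of validators to connect to no longer empties the reserved set, so existing connections are not torn down every few seconds.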

-

- Mar 01, 2024

-

-

Andrei Eres authored

### What's been done

- `subsystem-bench` has been split into two parts: a cli benchmark runner and a library.
- The cli runner is quite simple. It just allows us to run `.yaml` based test sequences. Now it should only be used to run benchmarks during development.
- The library is used in the cli runner and in regression tests. Some code is changed to make the library independent of the runner.
- Added first regression tests for availability read and write that replicate existing test sequences.

### How we run regression tests

- Regression tests are simply rust integration tests without the harnesses.
- They should only be compiled under the `subsystem-benchmarks` feature to prevent them from running with other tests.
- This doesn't work when running tests with `nextest` in CI, so additional filters have been added to the `nextest` runs.
- Each benchmark run takes a different time in the beginning, so we "warm up" the tests until their CPU usage differs by only 1%.
- After the warm-up, we run the benchmarks a few more times and compare the average with the expected value within a given precision.

### What is still wrong?

- I haven't managed to set up approval voting tests. The spread of their results is too large and can't be narrowed down in a reasonable amount of time in the warm-up phase.
- The tests start an unconfigurable prometheus endpoint inside, which causes errors because they use the same 9999 port. I disable it with a flag, but I think it's better to extract the endpoint launching outside the test, as we already do with `valgrind` and `pyroscope`. But we still use `prometheus` inside the tests.

### Future work

* https://github.com/paritytech/polkadot-sdk/issues/3528
* https://github.com/paritytech/polkadot-sdk/issues/3529
* https://github.com/paritytech/polkadot-sdk/issues/3530
* https://github.com/paritytech/polkadot-sdk/issues/3531

---------

Co-authored-by:

Alexander Samusev <[email protected]>
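The warm-up and comparison steps from "How we run regression tests" can be sketched like this. The function names `warm_up` and `within_precision`, the relative tolerance, and the `max_runs` cap are assumptions for illustration; the actual library may structure this differently.

```rust
/// Run `bench` until two consecutive results differ by at most `tolerance`
/// (relative to the previous result), or until `max_runs` runs were made.
/// Returns `true` if the results stabilized.
pub fn warm_up(mut bench: impl FnMut() -> f64, tolerance: f64, max_runs: usize) -> bool {
    let mut previous = bench();
    for _ in 1..max_runs {
        let current = bench();
        if (current - previous).abs() <= tolerance * previous.abs() {
            return true;
        }
        previous = current;
    }
    false
}

/// Check that the mean of `samples` is within `precision` (relative) of `expected`.
pub fn within_precision(samples: &[f64], expected: f64, precision: f64) -> bool {
    if samples.is_empty() {
        return false;
    }
    let mean = samples.iter().sum::<f64>() / samples.len() as f64;
    (mean - expected).abs() <= precision * expected.abs()
}
```

With a tolerance of `0.01` this corresponds to the "differs by only 1%" warm-up criterion; loosening `precision` is the knob one would turn for a volatile subsystem.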

-

- Feb 26, 2024

-

-

Alexandru Gheorghe authored

Add more debug logs to understand if statement-distribution is in a bad state; they should be useful for debugging https://github.com/paritytech/polkadot-sdk/issues/3314 on production networks. Additionally, increase the number of parallel requests we make, since I noticed that requests take around 100ms on kusama, and the limit of 5 parallel requests was picked mostly at random; there is no reason why we can't do more than that. --------- Signed-off-by:

Alexandru Gheorghe <[email protected]> Co-authored-by:

ordian <[email protected]>

-

- Feb 20, 2024

-

-

Oliver Tale-Yazdi authored

Lifting some more dependencies to the workspace. Just using the most-often updated ones for now.

It can be reproduced locally.

```sh
# First you can check if there would be semver incompatible bumps (looks good in this case):
$ zepter transpose dependency lift-to-workspace --ignore-errors syn quote thiserror "regex:^serde.*"

# Then apply the changes:
$ zepter transpose dependency lift-to-workspace --version-resolver=highest syn quote thiserror "regex:^serde.*" --fix

# And format the changes:
$ taplo format --config .config/taplo.toml
```

---------

Signed-off-by:Oliver Tale-Yazdi <[email protected]>

-

- Feb 19, 2024

-

-

Alexandru Gheorghe authored

~The previous fix was actually incomplete because we update the authorities only in the situation where we decided to reconnect because we had a low connectivity issue. Now the problem is that update_authority_ids uses the list of connected peers, so on restart that doesn't contain anything, so calling it immediately after issue_connection_request won't detect all authorities, so we need to also check every block as the comment said, but that did not match the code.~

Actually the fix was correct. The flow is as follows: if more than 1/3 of the authorities cannot be resolved, we set last_failure and call `ConnectToResolvedValidators`. We will call UpdateAuthorities for all the authorities that are already connected and whose address we already know. For the ones that connect later on, `PeerConnected` will have the AuthorityId field set, because it is already known, so approval-distribution will update its cached topology.

---------

Signed-off-by:

Alexandru Gheorghe <[email protected]> Co-authored-by:

Alexander Samusev <[email protected]>

-

- Feb 12, 2024

-

-

Oliver Tale-Yazdi authored

Changes (partial https://github.com/paritytech/polkadot-sdk/issues/994): - Set log to `0.4.20` everywhere - Lift `log` to the workspace Starting with a simpler one after seeing https://github.com/paritytech/polkadot-sdk/pull/2065 from @jsdw . This sets `default-features` to `false` in the root and then overwrites that in each crate to its original value. This is necessary since otherwise the `default` features are additive and it's impossible to disable them in the crate again once they are enabled in the workspace. I am using a tool to do this, so it's mostly a test to see that it works as expected. --------- Signed-off-by:

Oliver Tale-Yazdi <[email protected]>

-

Alexandru Gheorghe authored

On grid distribution, messages have two paths for reaching a node, so there is the possibility of a race when two peers send each other the same statement around the same time. Statement local_knowledge will tell us that the peer should not have sent the statement because we sent it to it. Fix it by also keeping track of the statements we received from a given peer, and penalize the peer only if it sends a statement to us more than once. Fixes: https://github.com/paritytech/polkadot-sdk/issues/2346 Additionally, also use different Cost labels for different paths to make it easier to debug things. --------- Signed-off-by:

Alexandru Gheorghe <[email protected]>
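A minimal sketch of the "penalize only on a repeated send" rule follows. `ReceivedStatements` and `note_received` are hypothetical names, and peers and statements are reduced to `u64` ids for illustration; the real subsystem tracks richer knowledge per peer.

```rust
use std::collections::{HashMap, HashSet};

/// Hypothetical record of which statements each peer has already sent us.
#[derive(Default)]
pub struct ReceivedStatements {
    /// Peer id mapped to the set of statement ids received from that peer.
    seen: HashMap<u64, HashSet<u64>>,
}

impl ReceivedStatements {
    /// Record that `peer` sent us `statement`.
    /// Returns `true` only if this peer already sent the same statement
    /// before, which is the case where a penalty would apply.
    pub fn note_received(&mut self, peer: u64, statement: u64) -> bool {
        // `insert` returns false when the value was already present.
        !self.seen.entry(peer).or_default().insert(statement)
    }
}
```

Because the record is keyed by what the peer sent us rather than by what we sent the peer, a statement that crosses on the wire with our own copy is accepted once without a penalty.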

-

- Jan 31, 2024

-

-

Alexandru Gheorghe authored

Signed-off-by:Alexandru Gheorghe <[email protected]>

-

- Jan 30, 2024

-

-

Andrei Sandu authored

fixes #675 --------- Signed-off-by:Andrei Sandu <[email protected]>

-

- Jan 29, 2024

-

-

Alexandru Gheorghe authored

Topology arrives only at the beginning of each session, so we might lose it if prospective parachains was not enabled at the beginning of the session. Cache it for later use. Fixes: https://github.com/paritytech/polkadot-sdk/issues/3058 --------- Signed-off-by:

Alexandru Gheorghe <[email protected]>

-

- Jan 26, 2024

-

-

Liam Aharon authored

Related https://github.com/paritytech/polkadot-sdk/issues/3032 --- Using https://github.com/liamaharon/cargo-workspace-version-tools/ `cargo run -- sync --path ../polkadot-sdk` --------- Signed-off-by:

Oliver Tale-Yazdi <[email protected]> Co-authored-by:

Oliver Tale-Yazdi <[email protected]>

-

- Jan 25, 2024

-

-

Alexandru Gheorghe authored

Fixes: https://github.com/paritytech/polkadot-sdk/issues/2138. Especially on restart, the AuthorityDiscovery cache is not populated, so we create an invalid topology and messages won't be routed correctly for the entire session. This PR proposes to fix this by updating the topology as soon as we know the Authority/PeerId mapping, which should improve the situation dramatically. [This issue was hit yesterday](https://grafana.teleport.parity.io/goto/o9q2625Sg?orgId=1) on Westend and resulted in stalling the finality. # TODO - [x] Unit tests - [x] Test impact on versi --------- Signed-off-by:

Alexandru Gheorghe <[email protected]>

-

- Jan 24, 2024

-

-

asynchronous rob authored

https://github.com/paritytech/polkadot-sdk/pull/3042/files#r1465115145 --------- Co-authored-by: command-bot <>

-

Bastian Köcher authored

As activation can fail, we ensure that we don't miss deactivation of leaves.

-

- Jan 23, 2024

-

-

Andrei Sandu authored

Found the issue while investigating the recent finality stall on Westend after upgrading to 1.6.0. Approval distribution aggression is supposed to trade off bandwidth and re-send assignments/approvals until enough approvals are received by at least 2/3 of validators. This is supposed to be a catch-all mechanism for when network connectivity goes south or many validators reboot at the same time. This fix ensures that we always resend approvals starting with the first unfinalized block, even when it appears approved from the node's perspective. TODO: - [x] Versi test --------- Signed-off-by:Andrei Sandu <[email protected]>

-

- Jan 22, 2024

-

-

Davide Galassi authored

Step towards https://github.com/paritytech/polkadot-sdk/issues/1975 As reported in https://github.com/paritytech/polkadot-sdk/issues/1975#issuecomment-1774534225, I'd like to encapsulate crypto related stuff in a dedicated folder. Currently all cryptographic primitive wrappers are scattered in `substrate/core`, which contains "misc core" stuff. To simplify the process, as the first step with this PR I propose to move the cryptographic hashing there. The `substrate/crypto` folder was already created to contain the `ec-utils` crate. Notes: - rename `sp-core-hashing` to `sp-crypto-hashing` - rename `sp-core-hashing-proc-macro` to `sp-crypto-hashing-proc-macro` - As the crate names have changed, I took the freedom to restart fresh from version 0.1.0 for both crates --------- Co-authored-by:

Robert Hambrock <[email protected]>

-

- Jan 18, 2024

-

-

Andrei Sandu authored

This is not actually an error of the node, but an issue with the incoming assignment. --------- Signed-off-by:Andrei Sandu <[email protected]>

-

- Jan 12, 2024

-

-

dependabot[bot] authored

Bumps [indexmap](https://github.com/bluss/indexmap) from 1.9.3 to 2.0.0.

<details>
<summary>Changelog</summary>
<p><em>Sourced from <a href="https://github.com/bluss/indexmap/blob/master/RELEASES.md">indexmap's changelog</a>.</em></p>
<blockquote>
<ul>
<li>
<p>2.0.0</p>
<ul>
<li><p><strong>MSRV</strong>: Rust 1.64.0 or later is now required.</p></li>
<li><p>The <code>"std"</code> feature is no longer auto-detected. It is included in the default feature set, or else can be enabled like any other Cargo feature.</p></li>
<li><p>The <code>"serde-1"</code> feature has been removed, leaving just the optional <code>"serde"</code> dependency to be enabled like a feature itself.</p></li>
<li><p><code>IndexMap::get_index_mut</code> now returns <code>Option<(&K, &mut V)></code>, changing the key part from <code>&mut K</code> to <code>&K</code>. There is also a new alternative <code>MutableKeys::get_index_mut2</code> to access the former behavior.</p></li>
<li><p>The new <code>map::Slice<K, V></code> and <code>set::Slice<T></code> offer a linear view of maps and sets, behaving a lot like normal <code>[(K, V)]</code> and <code>[T]</code> slices. Notably, comparison traits like <code>Eq</code> only consider items in order, rather than hash lookups, and slices even implement <code>Hash</code>.</p></li>
<li><p><code>IndexMap</code> and <code>IndexSet</code> now have <code>sort_by_cached_key</code> and <code>par_sort_by_cached_key</code> methods which perform stable sorts in place using a key extraction function.</p></li>
<li><p><code>IndexMap</code> and <code>IndexSet</code> now have <code>reserve_exact</code>, <code>try_reserve</code>, and <code>try_reserve_exact</code> methods that correspond to the same methods on <code>Vec</code>. However, exactness only applies to the direct capacity for items, while the raw hash table still follows its own rules for capacity and load factor.</p></li>
<li><p>The <code>Equivalent</code> trait is now re-exported from the <code>equivalent</code> crate, intended as a common base to allow types to work with multiple map types.</p></li>
<li><p>The <code>hashbrown</code> dependency has been updated to version 0.14.</p></li>
<li><p>The <code>serde_seq</code> module has been moved from the crate root to below the <code>map</code> module.</p></li>
</ul>
</li>
</ul>
</blockquote>
</details>

<details>
<summary>Commits</summary>
<ul>
<li><a href="https://github.com/bluss/indexmap/commit/8e47be81a10bcb4b203955d6f6eb05bf85630c20"><code>8e47be8</code></a> Merge pull request <a href="https://redirect.github.com/bluss/indexmap/issues/267">#267</a> from cuviper/release-2.0.0</li>
<li><a href="https://github.com/bluss/indexmap/commit/ad694fbb8c73f92e4316e436422dc80763a7ed03"><code>ad694fb</code></a> Release 2.0.0</li>
<li><a href="https://github.com/bluss/indexmap/commit/b5b2814999255f82559f172d00d1382ae66d23c1"><code>b5b2814</code></a> Merge pull request <a href="https://redirect.github.com/bluss/indexmap/issues/266">#266</a> from cuviper/doc-capacity</li>
<li><a href="https://github.com/bluss/indexmap/commit/d3ea28992194484dea671a19b808b62ed8efd5d1"><code>d3ea289</code></a> Document the lower-bound semantics of capacity</li>
<li><a href="https://github.com/bluss/indexmap/commit/74e14dac622eac69fa292b100a51c5a385a81d61"><code>74e14da</code></a> Merge pull request <a href="https://redirect.github.com/bluss/indexmap/issues/264">#264</a> from cuviper/equivalent</li>
<li><a href="https://github.com/bluss/indexmap/commit/677c60522815f53e83ab173c199772567e9c9007"><code>677c605</code></a> Add a relnote for Equivalent</li>
<li><a href="https://github.com/bluss/indexmap/commit/6d83bc1902b95758d98ea973778d8fc4b4a599a2"><code>6d83bc1</code></a> pub use equivalent::Equivalent;</li>
<li><a href="https://github.com/bluss/indexmap/commit/bb48357fee6494a335963072ea8c51ed30903a19"><code>bb48357</code></a> Merge pull request <a href="https://redirect.github.com/bluss/indexmap/issues/263">#263</a> from cuviper/insert_in_slot</li>
<li><a href="https://github.com/bluss/indexmap/commit/c37dae6bcb2fc2c1f45b1e6fd924f92685acd8b3"><code>c37dae6</code></a> Use hashbrown's new single-lookup insertion</li>
<li><a href="https://github.com/bluss/indexmap/commit/ee71507aaacf25b43f99350af44c137e6af65a7c"><code>ee71507</code></a> Merge pull request <a href="https://redirect.github.com/bluss/indexmap/issues/262">#262</a> from daxpedda/hashbrown-v0.14</li>
<li>Additional commits viewable in <a href="https://github.com/bluss/indexmap/compare/1.9.3...2.0.0">compare view</a></li>
</ul>
</details>
<br />

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)
[//]: # (dependabot-automerge-end)

---

<details>
<summary>Dependabot commands and options</summary>
<br />

You can trigger Dependabot actions by commenting on this PR:
- `@dependabot rebase` will rebase this PR
- `@dependabot recreate` will recreate this PR, overwriting any edits that have been made to it
- `@dependabot merge` will merge this PR after your CI passes on it
- `@dependabot squash and merge` will squash and merge this PR after your CI passes on it
- `@dependabot cancel merge` will cancel a previously requested merge and block automerging
- `@dependabot reopen` will reopen this PR if it is closed
- `@dependabot close` will close this PR and stop Dependabot recreating it. You can achieve the same result by closing it manually
- `@dependabot show <dependency name> ignore conditions` will show all of the ignore conditions of the specified dependency
- `@dependabot ignore <dependency name> major version` will close this group update PR and stop Dependabot creating any more for the specific dependency's major version (unless you unignore this specific dependency's major version or upgrade to it yourself)
- `@dependabot ignore <dependency name> minor version` will close this group update PR and stop Dependabot creating any more for the specific dependency's minor version (unless you unignore this specific dependency's minor version or upgrade to it yourself)
- `@dependabot ignore <dependency name>` will close this group update PR and stop Dependabot creating any more for the specific dependency (unless you unignore this specific dependency or upgrade to it yourself)
- `@dependabot unignore <dependency name>` will remove all of the ignore conditions of the specified dependency
- `@dependabot unignore <dependency name> <ignore condition>` will remove the ignore condition of the specified dependency and ignore conditions

</details>

Signed-off-by:

dependabot[bot] <[email protected]> Co-authored-by:

dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com>

-

- Jan 10, 2024

-

-

Alin Dima authored

Previously, it was only possible to retry the same request on a different protocol name that had the exact same binary payloads. Introduce a way of trying a different request on a different protocol if the first one fails with Unsupported protocol. This helps with adding new req-response versions in polkadot while preserving compatibility with unupgraded nodes. The way req-response protocols were bumped previously was that they were bundled with some other notifications protocol upgrade, like for async backing (but that is more complicated, especially if the feature does not require any changes to a notifications protocol). Will be needed for implementing https://github.com/polkadot-fellows/RFCs/pull/47 TODO: - [x] add tests - [x] add guidance docs in polkadot about req-response protocol versioning
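The fallback behaviour described above can be sketched as follows. This is not the `sc-network` API: `Request`, `RequestFailure`, and `send_with_fallback` are hypothetical, and the transport is abstracted as a closure so the sketch stays self-contained.

```rust
/// Hypothetical failure type; only the unsupported-protocol case triggers a fallback.
#[derive(Debug, PartialEq, Eq)]
pub enum RequestFailure {
    UnsupportedProtocol,
    Other(String),
}

/// Hypothetical request: a protocol name plus its encoded payload.
pub struct Request {
    pub protocol: &'static str,
    pub payload: Vec<u8>,
}

/// Send `primary`; if the remote does not support its protocol, send the
/// (possibly differently encoded) `fallback` request instead.
/// Any other failure is returned as-is without retrying.
pub fn send_with_fallback<F>(
    primary: Request,
    fallback: Option<Request>,
    mut send: F,
) -> Result<Vec<u8>, RequestFailure>
where
    F: FnMut(&Request) -> Result<Vec<u8>, RequestFailure>,
{
    match send(&primary) {
        Err(RequestFailure::UnsupportedProtocol) => match fallback {
            Some(request) => send(&request),
            None => Err(RequestFailure::UnsupportedProtocol),
        },
        other => other,
    }
}
```

The key difference from retrying the same bytes on another protocol name is that the fallback carries its own payload, so a new request version can change the wire format while still reaching nodes that have not upgraded.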

-

dependabot[bot] authored

Bumps [parking_lot](https://github.com/Amanieu/parking_lot) from 0.11.2 to 0.12.1.

<details>
<summary>Changelog</summary>
<p><em>Sourced from <a href="https://github.com/Amanieu/parking_lot/blob/master/CHANGELOG.md">parking_lot's changelog</a>.</em></p>
<blockquote>
<h2>parking_lot 0.12.1 (2022-05-31)</h2>
<ul>
<li>Fixed incorrect memory ordering in <code>RwLock</code>. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/344">#344</a>)</li>
<li>Added <code>Condvar::wait_while</code> convenience methods (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/343">#343</a>)</li>
</ul>
<h2>parking_lot_core 0.9.3 (2022-04-30)</h2>
<ul>
<li>Bump windows-sys dependency to 0.36. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/339">#339</a>)</li>
</ul>
<h2>parking_lot_core 0.9.2, lock_api 0.4.7 (2022-03-25)</h2>
<ul>
<li>Enable const new() on lock types on stable. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/325">#325</a>)</li>
<li>Added <code>MutexGuard::leak</code> function. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/333">#333</a>)</li>
<li>Bump windows-sys dependency to 0.34. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/331">#331</a>)</li>
<li>Bump petgraph dependency to 0.6. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/326">#326</a>)</li>
<li>Don't use pthread attributes on the espidf platform. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/319">#319</a>)</li>
</ul>
<h2>parking_lot_core 0.9.1 (2022-02-06)</h2>
<ul>
<li>Bump windows-sys dependency to 0.32. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/316">#316</a>)</li>
</ul>
<h2>parking_lot 0.12.0, parking_lot_core 0.9.0, lock_api 0.4.6 (2022-01-28)</h2>
<ul>
<li>The MSRV is bumped to 1.49.0.</li>
<li>Disabled eventual fairness on wasm32-unknown-unknown. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/302">#302</a>)</li>
<li>Added a rwlock method to report if lock is held exclusively. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/303">#303</a>)</li>
<li>Use new <code>asm!</code> macro. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/304">#304</a>)</li>
<li>Use windows-rs instead of winapi for faster builds. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/311">#311</a>)</li>
<li>Moved hardware lock elision support to a separate Cargo feature. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/313">#313</a>)</li>
<li>Removed used of deprecated <code>spin_loop_hint</code>. (<a href="https://redirect.github.com/Amanieu/parking_lot/issues/314">#314</a>)</li>
</ul>
</blockquote>
</details>

<details>
<summary>Commits</summary>
<ul>
<li><a href="https://github.com/Amanieu/parking_lot/commit/336a9b31ff385728d00eb7ef173e4d054584b787"><code>336a9b3</code></a> Release parking_lot 0.12.1</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/b69a0547ce6c92b164528c65daafa48e8f1c08bb"><code>b69a054</code></a> Merge pull request <a href="https://redirect.github.com/Amanieu/parking_lot/issues/343">#343</a> from bryanhitc/master</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/3fe7233ee05fbae9bc94a831d4e66df0257880a2"><code>3fe7233</code></a> Merge pull request <a href="https://redirect.github.com/Amanieu/parking_lot/issues/344">#344</a> from Amanieu/fix_rwlock_ordering</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/ef12b00daf0dbc6dd025098ec2cd0517bb9f737c"><code>ef12b00</code></a> small test update</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/fdb063cd4e33d9c44cc08aea0c341ffec7e7b282"><code>fdb063c</code></a> wait_while can't timeout fix</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/26e19dced4ae01000abc0dfca251f56695b1b8aa"><code>26e19dc</code></a> Remove WaitWhileResult</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/d26c284fe8413bdeeed3ef4f70e23b5919a9ffeb"><code>d26c284</code></a> Fix incorrect memory ordering in RwLock</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/045828381a5facff71b1aad3c01af15801959458"><code>0458283</code></a> Use saturating_add for WaitWhileResult</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/686db4755913947219f83a04b21885032685d246"><code>686db47</code></a> Add Condvar::wait_while convenience methods</li>
<li><a href="https://github.com/Amanieu/parking_lot/commit/6f6e021ced6f67fef8002442aa97c4a08ed5c3e4"><code>6f6e021</code></a> Merge pull request <a href="https://redirect.github.com/Amanieu/parking_lot/issues/342">#342</a> from MarijnS95/patch-1</li>
<li>Additional commits viewable in <a href="https://github.com/Amanieu/parking_lot/compare/0.11.2...0.12.1">compare view</a></li>
</ul>
</details>
<br />

Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`.

[//]: # (dependabot-automerge-start)
[//]: # (dependabot-automerge-end)

---

Signed-off-by:

dependabot[bot] <[email protected]> Co-authored-by:

dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com>

-

ordian authored

Closes #1591. The purpose of this PR is to filter out backing statements from the network that are signed by disabled validators. This is just an optimization, since we will do the filtering in the runtime in #1863, to avoid nodes having to filter garbage out at block production time. - [x] Ensure it's ok to fiddle with the mask of manifests - [x] Write more unit tests - [x] Test locally - [x] simple zombienet test - [x] PRDoc --------- Co-authored-by: Tsvetomir Dimitrov <[email protected]>
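As an illustration of the filtering this commit message describes, here is a minimal Rust sketch that drops statements whose signer is in a disabled set. The `ValidatorIndex` and `Statement` types and the `filter_disabled` function are hypothetical, heavily simplified stand-ins and are not the SDK's real types or the PR's actual code.

```rust
use std::collections::HashSet;

/// Hypothetical stand-in for a validator index (not the SDK's real type).
#[derive(Clone, Copy, Debug, PartialEq, Eq, Hash)]
pub struct ValidatorIndex(pub u32);

/// Hypothetical, heavily simplified backing statement.
#[derive(Clone, Debug, PartialEq, Eq)]
pub struct Statement {
    /// Validator that signed the statement.
    pub signer: ValidatorIndex,
    /// Illustrative candidate identifier.
    pub candidate: u64,
}

/// Keep only the statements whose signer is NOT in the disabled set.
/// The relative order of the remaining statements is preserved.
pub fn filter_disabled(
    statements: Vec<Statement>,
    disabled: &HashSet<ValidatorIndex>,
) -> Vec<Statement> {
    statements
        .into_iter()
        .filter(|statement| !disabled.contains(&statement.signer))
        .collect()
}
```

Given statements signed by validators 0, 1 and 2 and a disabled set containing validator 1, `filter_disabled` returns only the statements from validators 0 and 2. A hash set keeps the per-statement lookup cheap, which fits the stated goal of this being an optimization rather than the authoritative check.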

-

- Dec 19, 2023

-

-

André Silva authored

-

- Dec 18, 2023

-

-

Branislav Kontur authored

This PR contains just a few clippy fixes and nits, which are, however, relaxed by workspace clippy settings here: https://github.com/paritytech/polkadot-sdk/blob/master/Cargo.toml#L483-L506 --------- Co-authored-by:

Dmitry Sinyavin <[email protected]> Co-authored-by:

ordian <[email protected]> Co-authored-by: command-bot <> Co-authored-by:

Bastian Köcher <[email protected]>

-

dependabot[bot] authored

Bumps [async-trait](https://github.com/dtolnay/async-trait) from 0.1.73 to 0.1.74. <details> <summary>Release notes</summary> <p><em>Sourced from <a href="https://github.com/dtolnay/async-trait/releases">async-trait's releases</a>.</em></p> <blockquote> <h2>0.1.74</h2> <ul> <li>Documentation improvements</li> </ul> </blockquote> </details> <details> <summary>Commits</summary> <ul> <li><a href="https://github.com/dtolnay/async-trait/commit/265979b07a9af573e1edd3b2a9b179533cfa7a6c"><code>265979b</code></a> Release 0.1.74</li> <li><a href="https://github.com/dtolnay/async-trait/commit/5e677097d2e67f7a5c5e3023e2f3b99b36a9e132"><code>5e67709</code></a> Fix doc test when async fn in trait is natively supported</li> <li><a href="https://github.com/dtolnay/async-trait/commit/ef144aed28b636eb65759505b2323afc4c753fbe"><code>ef144ae</code></a> Update ui test suite to nightly-2023-10-15</li> <li><a href="https://github.com/dtolnay/async-trait/commit/9398a28d6fc977ccf8c286bd85b4b87a883f92ac"><code>9398a28</code></a> Test docs.rs documentation build in CI</li> <li><a href="https://github.com/dtolnay/async-trait/commit/8737173dafa371e5e9e491d736513be1baf697f4"><code>8737173</code></a> Update ui test suite to nightly-2023-09-24</li> <li><a href="https://github.com/dtolnay/async-trait/commit/5ba643c001a55f70c4a44690e040cdfab873ba56"><code>5ba643c</code></a> Test dyn Trait containing async fn</li> <li><a href="https://github.com/dtolnay/async-trait/commit/247c8e7b0b3ff69c9518ebf93e69fe74d47f17b6"><code>247c8e7</code></a> Add ui test testing the recommendation to use async-trait</li> <li><a href="https://github.com/dtolnay/async-trait/commit/799db66a84834c403860df4a8c0227d8fb7f9d9d"><code>799db66</code></a> Update ui test suite to nightly-2023-09-23</li> <li><a href="https://github.com/dtolnay/async-trait/commit/0e60248011f751d8ccf58219d0a79aacfe9619f1"><code>0e60248</code></a> Update actions/checkout@v3 -> v4</li> <li><a 
href="https://github.com/dtolnay/async-trait/commit/7fcbc83993d5ef483d048c271a7f6c4ac8c98388"><code>7fcbc83</code></a> Update ui test suite to nightly-2023-08-29</li> <li>See full diff in <a href="https://github.com/dtolnay/async-trait/compare/0.1.73...0.1.74">compare view</a></li> </ul> </details> Signed-off-by:

dependabot[bot] <[email protected]> Co-authored-by:

dependabot[bot] <49699333+dependabot[bot]@users.noreply.github.com>

-

- Dec 14, 2023

-

-

Andrei Sandu authored

This tool makes it easy to run parachain consensus stress/performance testing on your development machine or in CI.

## Motivation

The parachain consensus node implementation spans many modules, which we call subsystems. Each subsystem is responsible for a small part of the logic of the parachain consensus pipeline, but in general most load and performance issues are localized in just a few core subsystems like `availability-recovery`, `approval-voting` or `dispute-coordinator`. In the absence of such a tool, we would run large test nets to load/stress test these parts of the system. Setting up such a large test and making sense of the amount of data it produces is very expensive, hard to orchestrate and a huge development time sink.

## PR contents

- CLI tool
- Data Availability Read test
- reusable mockups and components needed so far
- Documentation on how to get started

### Data Availability Read test

An overseer is built using a real `availability-recovery` subsystem instance, while dependent subsystems like `av-store`, `network-bridge` and `runtime-api` are mocked. The network bridge emulates all the network peers and their answers to requests. The test runs for a number of blocks. For each block it generates and sends a “RecoverAvailableData” request for an arbitrary number of candidates. We wait for the subsystem to respond to all requests before moving to the next block. At the same time we collect the usual subsystem metrics and task CPU metrics and show progress reports while running.

### Here is what the CLI looks like:

```
[2023-11-28T13:06:27Z INFO subsystem_bench::core::display] n_validators = 1000, n_cores = 20, pov_size = 5120 - 5120, error = 3, latency = Some(PeerLatency { min_latency: 1ms, max_latency: 100ms })
[2023-11-28T13:06:27Z INFO subsystem-bench::availability] Generating template candidate index=0 pov_size=5242880
[2023-11-28T13:06:27Z INFO subsystem-bench::availability] Created test environment.
[2023-11-28T13:06:27Z INFO subsystem-bench::availability] Pre-generating 60 candidates.
[2023-11-28T13:06:30Z INFO subsystem-bench::core] Initializing network emulation for 1000 peers.
[2023-11-28T13:06:30Z INFO subsystem-bench::availability] Current block 1/3
[2023-11-28T13:06:30Z INFO substrate_prometheus_endpoint] 〽️ Prometheus exporter started at 127.0.0.1:9999
[2023-11-28T13:06:30Z INFO subsystem_bench::availability] 20 recoveries pending
[2023-11-28T13:06:37Z INFO subsystem_bench::availability] Block time 6262ms
[2023-11-28T13:06:37Z INFO subsystem-bench::availability] Sleeping till end of block (0ms)
[2023-11-28T13:06:37Z INFO subsystem-bench::availability] Current block 2/3
[2023-11-28T13:06:37Z INFO subsystem_bench::availability] 20 recoveries pending
[2023-11-28T13:06:43Z INFO subsystem_bench::availability] Block time 6369ms
[2023-11-28T13:06:43Z INFO subsystem-bench::availability] Sleeping till end of block (0ms)
[2023-11-28T13:06:43Z INFO subsystem-bench::availability] Current block 3/3
[2023-11-28T13:06:43Z INFO subsystem_bench::availability] 20 recoveries pending
[2023-11-28T13:06:49Z INFO subsystem_bench::availability] Block time 6194ms
[2023-11-28T13:06:49Z INFO subsystem-bench::availability] Sleeping till end of block (0ms)
[2023-11-28T13:06:49Z INFO subsystem_bench::availability] All blocks processed in 18829ms
[2023-11-28T13:06:49Z INFO subsystem_bench::availability] Throughput: 102400 KiB/block
[2023-11-28T13:06:49Z INFO subsystem_bench::availability] Block time: 6276 ms
[2023-11-28T13:06:49Z INFO subsystem_bench::availability] Total received from network: 415 MiB
Total sent to network: 724 KiB
Total subsystem CPU usage 24.00s
CPU usage per block 8.00s
Total test environment CPU usage 0.15s
CPU usage per block 0.05s
```

### Prometheus/Grafana stack in action

<img width="1246" alt="Screenshot 2023-11-28 at 15 11 10" src="https://github.com/paritytech/polkadot-sdk/assets/54316454/eaa47422-4a5e-4a3a-aaef-14ca644c1574">
<img width="1246" alt="Screenshot 2023-11-28 at 15 12 01" src="https://github.com/paritytech/polkadot-sdk/assets/54316454/237329d6-1710-4c27-8f67-5fb11d7f66ea">
<img width="1246" alt="Screenshot 2023-11-28 at 15 12 38" src="https://github.com/paritytech/polkadot-sdk/assets/54316454/a07119e8-c9f1-4810-a1b3-f1b7b01cf357">

---------

Signed-off-by:Andrei Sandu <[email protected]>
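The per-block flow of the Data Availability Read test described in this commit message (send one recovery request per candidate, then wait for every answer before starting the next block) can be sketched roughly as follows. This is a toy model built on standard-library threads and channels, not the tool's code: `RecoveryRequest`, `spawn_mock_recovery` and `run_blocks` are hypothetical names, and sending exactly one candidate per core per block is an assumption chosen to match the "Pre-generating 60 candidates" line for 3 blocks and 20 cores.

```rust
use std::sync::mpsc;
use std::thread;

/// Hypothetical stand-in for a "RecoverAvailableData" request.
pub struct RecoveryRequest {
    /// Illustrative candidate index within the block.
    pub candidate: usize,
    /// Size of the PoV to recover, in KiB.
    pub pov_size_kib: u64,
    /// Channel on which the mock subsystem reports the recovered size.
    pub reply: mpsc::Sender<u64>,
}

/// Mock recovery worker: answers every request with the requested size.
/// A real run would go through the `availability-recovery` subsystem instead.
pub fn spawn_mock_recovery(requests: mpsc::Receiver<RecoveryRequest>) -> thread::JoinHandle<()> {
    thread::spawn(move || {
        for request in requests {
            // Ignore send errors: the requester may already have gone away.
            let _ = request.reply.send(request.pov_size_kib);
        }
    })
}

/// Run `num_blocks` blocks with one request per core (an assumption) and
/// return the number of KiB recovered in each block.
pub fn run_blocks(num_blocks: usize, n_cores: usize, pov_size_kib: u64) -> Vec<u64> {
    let (request_tx, request_rx) = mpsc::channel();
    let worker = spawn_mock_recovery(request_rx);
    let mut recovered_per_block = Vec::with_capacity(num_blocks);

    for _block in 0..num_blocks {
        let (reply_tx, reply_rx) = mpsc::channel();
        for candidate in 0..n_cores {
            request_tx
                .send(RecoveryRequest { candidate, pov_size_kib, reply: reply_tx.clone() })
                .expect("mock worker is still running");
        }
        // Drop our own sender so the reply channel closes once every
        // request has been answered and dropped by the worker.
        drop(reply_tx);
        // Block until all answers for this block have arrived.
        recovered_per_block.push(reply_rx.iter().sum());
    }

    // Closing the request channel lets the worker thread exit.
    drop(request_tx);
    worker.join().expect("mock worker panicked");
    recovered_per_block
}
```

With the parameters from the log above, `run_blocks(3, 20, 5120)` yields 102400 KiB for each of the three blocks, which lines up with the reported `Throughput: 102400 KiB/block` (20 cores times 5120 KiB). The sketch leaves out network emulation, latency and metrics collection entirely.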

-